Metrics Collector

Introduction

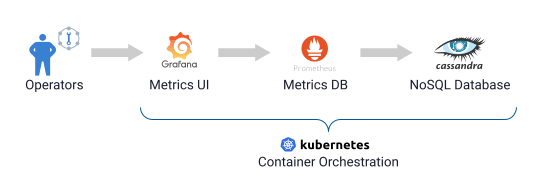

When running applications in Kubernetes, observability is key. K8ssandra easily connects to Prometheus and Grafana for storage and visualization of metrics associated with the Cassandra cluster.

Metrics Collector for Apache Cassandra (MCAC) is the key to providing useful metrics for K8ssandra users. MCAC is deployed to your Kubernetes environment by K8ssandra. If you haven’t already installed K8ssandra, see the install topics.

MCAC aggregates OS and Cassandra metrics along with diagnostic events to facilitate problem resolution and remediation. K8ssandra provides preconfigured Grafana dashboards to visualize the collected metrics.

About Metric Collector

-

Built on collectd, a popular, well-supported, open source metric collection agent. With over 90 plugins, you can tailor the solution to collect metrics most important to you and ship them to wherever you need.

-

Cassandra sends metrics and other structured events to collectd over a local Unix socket.

-

Fast and efficient. MCAC can track over 100k unique metric series per node. That is, metrics for hundreds of Cassandra tables.

-

Comes with extensive dashboards out of the box. The Cassandra dashboards let you aggregate latency accurately across all nodes, dc or rack, down to an individual table.

-

Design principles:

- Little or no performance impact to Cassandra

- Simple to deploy via the K8ssandra install, and self managed

- Collect all OS and Cassandra metrics by default

- Keep historical metrics on node for analysis

- Provide useful integration with Prometheus and Grafana

-

Supported versions of Apache Cassandra: 2.2+ (2.2.X, 3.0.X, 3.11.X, 4.0)

Note: Since v1.5.0, we introduced a new metrics endpoint and a Vector integration which aim at replacing MCAC.

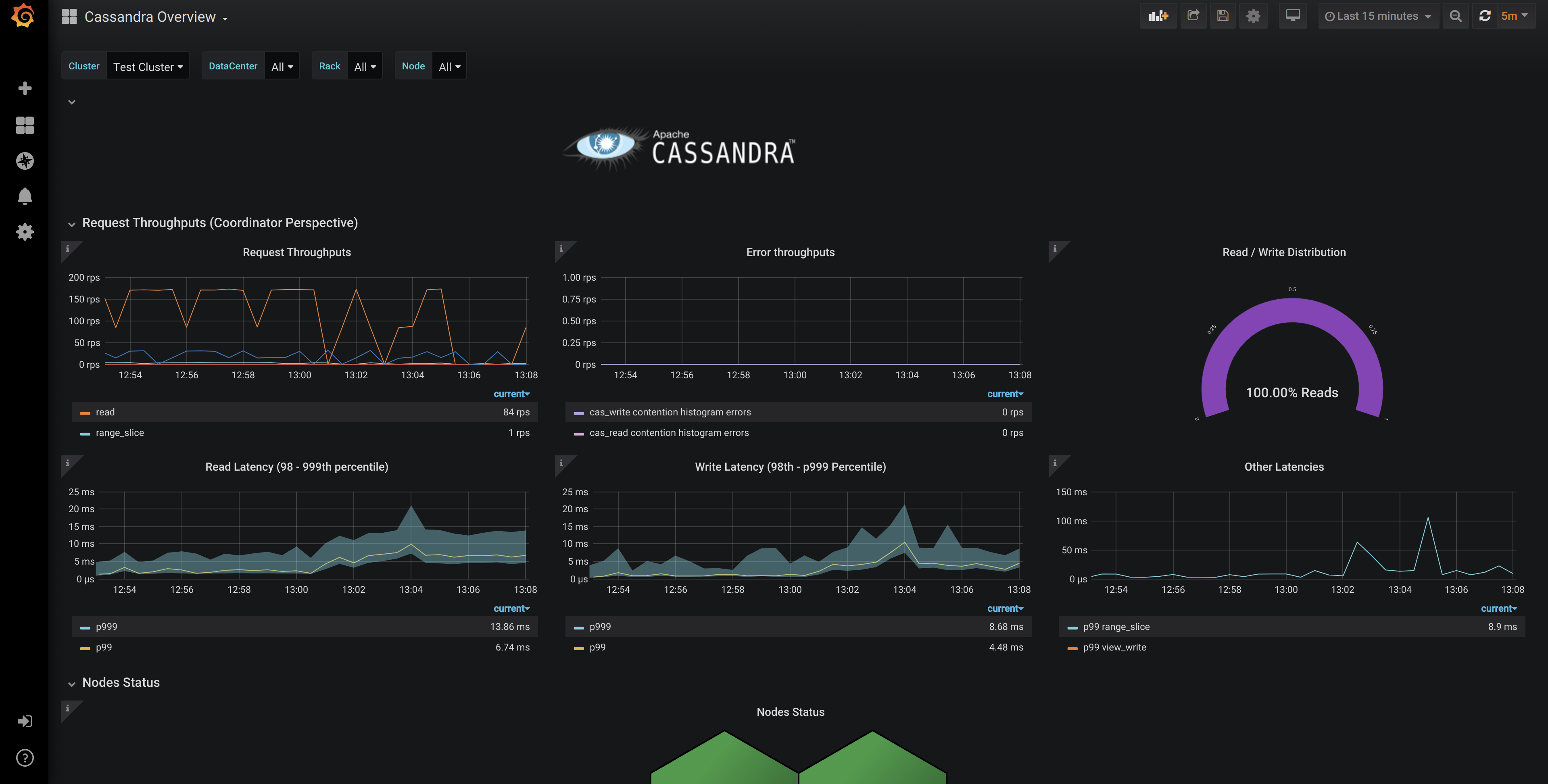

Sample overview metrics in Grafana

Cassandra node-level metrics are reported in the Prometheus format, covering everything from operations per second and latency, to compaction throughput and heap usage. Example:

Sample OS metrics in Grafana

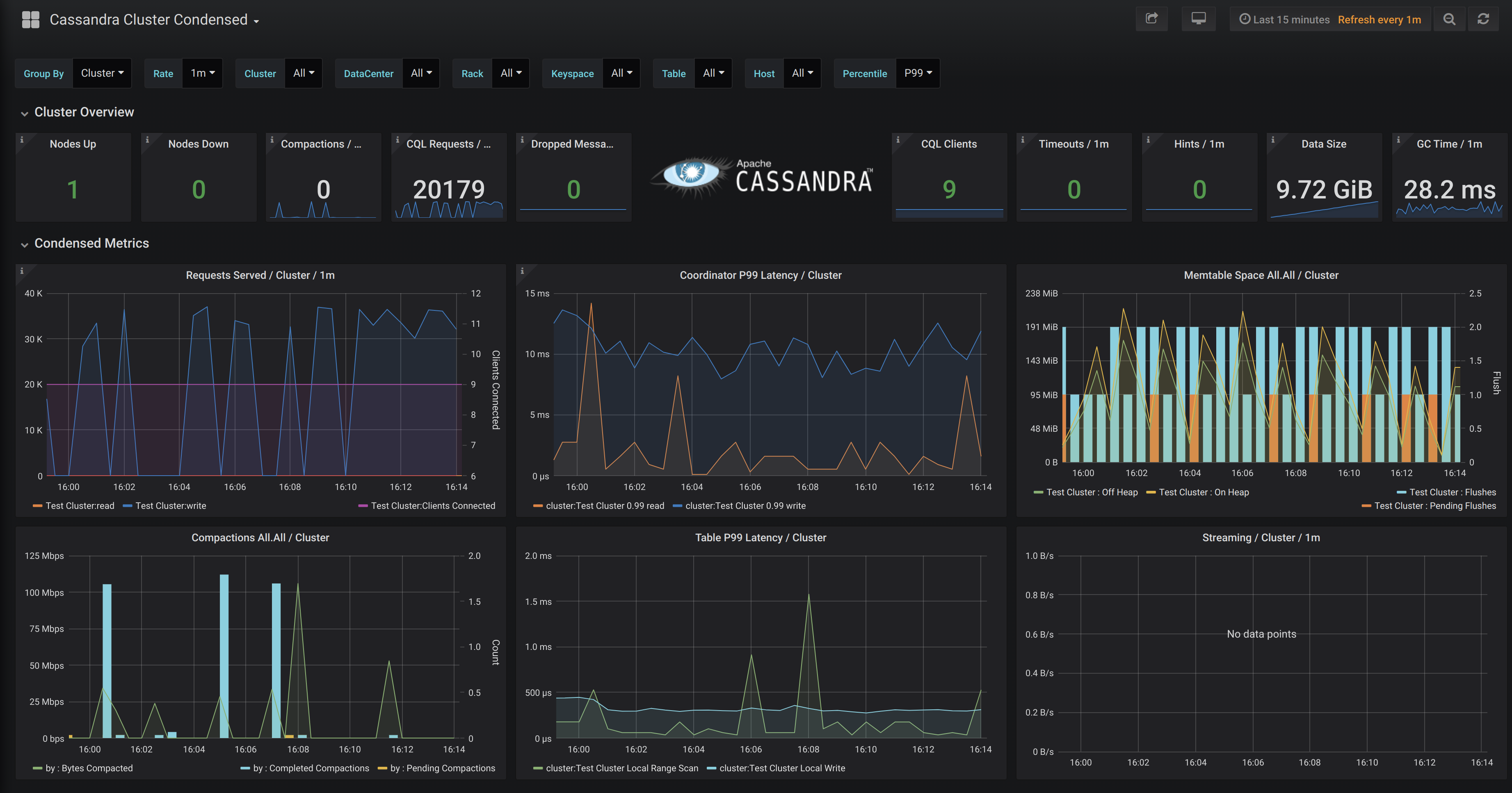

Sample cluster metrics in Grafana

Architecture details

Let’s walk through this architecture from left to right. We’ll provide links to the Kubernetes documentation so you can dig into those concepts more if you’d like to.

The Cassandra nodes in a K8ssandra-managed cluster are organized in one or more datacenters, each of which is composed of one or more racks. Each rack represents a failure domain with replicas being placed across multiple racks (if present). In Kubernetes, racks are represented as StatefulSets. (We’ll focus here on details of the Cassandra node related to monitoring.

Each Cassandra node is deployed as its own pod. The pod runs the Cassandra daemon in a Java VM. Each Apache Cassandra pod is configured with the DataStax Metrics Collector for Apache Cassandra, which is implemented as a Java agent running in that same VM. The Metrics Collector is configured to expose metrics on the standard Prometheus port (9103).

One or more Prometheus instances are deployed in another StatefulSet, with the default configuration starting with a single instance. Using a StatefulSet allows each Prometheus node to connect to a Persistent Volume (PV) for longer term storage. The default K8ssandra chart configuration does not use PVs. By default, metric data collected in the cluster is retained within Prometheus for 24 hours.

An instance of the Prometheus Operator is deployed using a Replica Set. The kube-prometheus-stack also defines several useful Kubernetes custom resources (CRDs) that the Prometheus Operator uses to manage Prometheus. One of these is the ServiceMonitor. K8ssandra uses ServiceMonitor resources, specifying labels selectors to indicate the Cassandra pods to connect to in each datacenter, and how to relabel each metric as it is stored in Prometheus. K8ssandra provides a ServiceMonitor for Stargate when it is enabled. Users may also configure ServiceMonitors to pull metrics from the various operators, but pre-configured instances are not provided at this time.

The AlertManager is an additional resource provided by kube-prometheus-stack that can be configured to specify thresholds for specific metrics that will trigger alerts. Users may enable, and configure, AlertManager through the values.yaml file. See the kube-prometheus-stack example for more information.

An instance of Grafana is deployed in a Replica Set. The GrafanaDataSource is yet another resource defined by kube-prometheus-stack, which is used to describe how to connect to the Prometheus service. Kubernetes config maps are used to populate GrafanaDashboard resources. These dashboards can be combined or customized.

Ingress or port forwarding can be used to expose access to the Prometheus and Grafana services external to the Kubernetes cluster.

FAQs

-

Where is the list of all Cassandra metrics?

The full list is located on Apache Cassandra docs site. The names are automatically changed from CamelCase to snake_case.

In the case of Prometheus the metrics are further renamed based on relabel config which live in the prometheus.yaml file in the MCAC repo.

-

How can I filter out metrics I don’t care about?

Please read the metrics-collector.yaml section in the MCAC GitHub repo on how to add filtering rules.

-

What is the datalog? And what is it for?

The datalog is a space limited JSON based structured log of metrics and events which are optionally kept on each node.

It can be useful to diagnose issues that come up with your cluster. If you wish to use the logs yourself, there’s a script included on the MCAC GitHub repo to parse these logs which can be analyzed or piped into jq.Alternatively, we offer free support for issues, and these logs can help our support engineers help diagnose your problem.

Next steps

- For details about viewing the metrics in Grafana dashboards provided by K8ssandra, see Monitor Cassandra.

- See the topics covering other components deployed by K8ssandra.

- For information on using other deployed components, see the Tasks topics.

Feedback

Was this page helpful?

Glad to hear it! Please tell us how we can improve.

Sorry to hear that. Please tell us how we can improve.